CAPTCHAs are everywhere, ready to test your intelligence and patience. They pop up at the worst times: when you’re signing in to a Gmail account, loading a web page, opening an online bank account, or reaching almost any other content online. A CAPTCHA challenges each visitor with a test that is designed to be easy for humans and hard for bots, so in most cases only a human can solve it.

What Is the Use of CAPTCHAs on Websites?

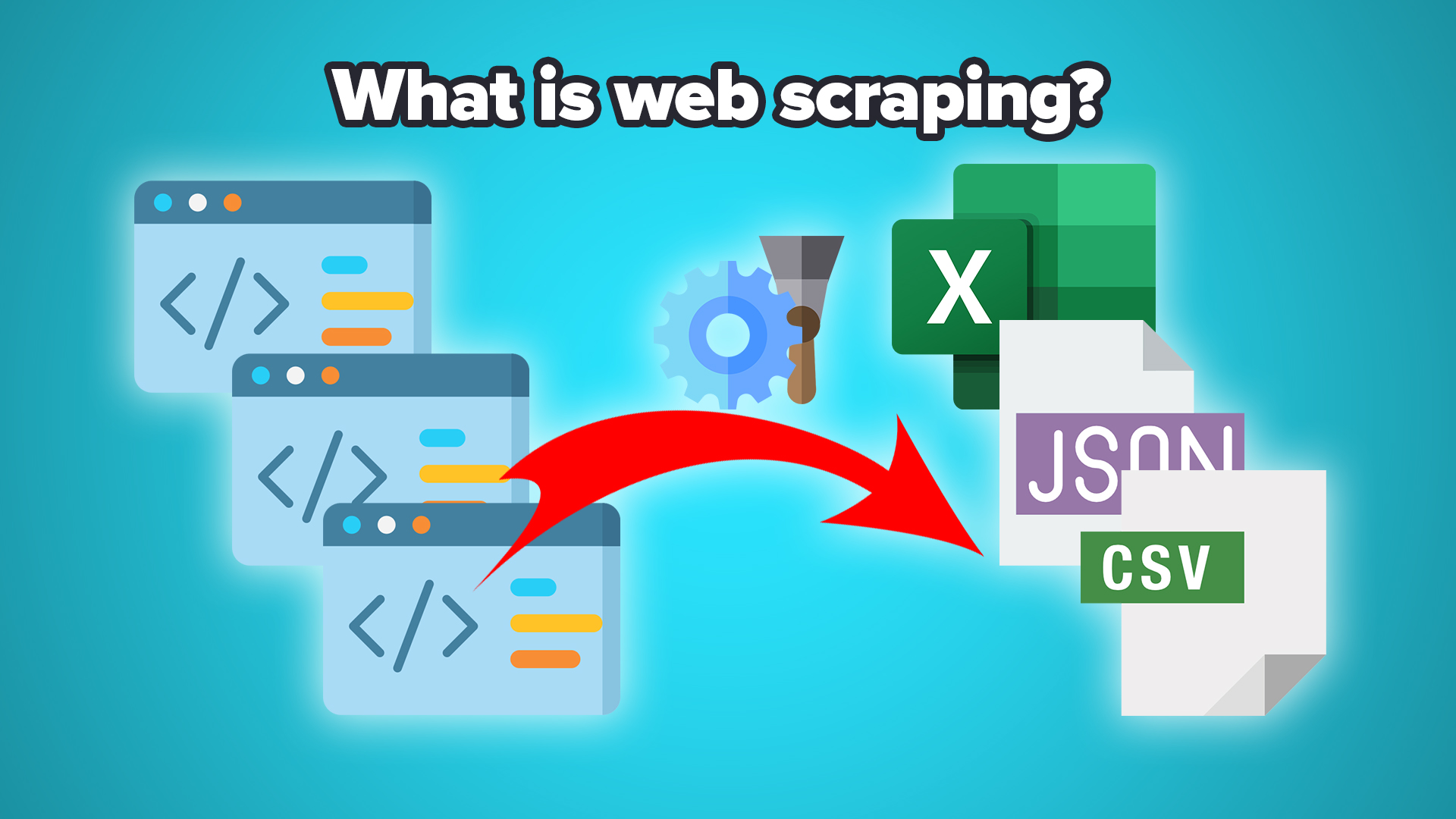

Websites use CAPTCHAs to drive away non-human traffic. The challenge lets a site tell humans from bots. Humans click on ads and make purchases, while bots don’t. Instead, malicious bots can hit websites with DDoS attacks or spread malware, ultimately hurting the sites. Not every bot is harmful, though. Scrapers are legitimate bots that only collect data, and they have grown popular among businesses, content creators, and marketers. Let’s explore some of the options available to get around CAPTCHAs.

Use Proxies to Make Your Bots Look Human

Using CAPTCHA proxies is the most realistic way to avoid CAPTCHAs when web scraping. These proxies route your requests through a rotating pool of IP addresses, making it hard for the website to pin the traffic to a single address. The rotating IPs let your scraping bots crawl a website multiple times without the site’s bot detection noticing a pattern.

CAPTCHA proxies come from either residential or data center proxy providers. By signing up with a CAPTCHA proxy provider, you gain access to a range of global IPs. Data center proxies are a bit cheaper but are not the safest option for website scraping, and they are more likely to trigger CAPTCHAs. Residential proxies are pricier but more secure and reliable, and they rarely trigger CAPTCHAs.
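To make the rotation idea concrete, here is a minimal sketch using only Python’s standard library. The addresses in `PROXY_POOL` are placeholders from a documentation-only IP range, and the helper names (`proxy_cycle`, `build_opener_for`, `fetch`) are made up for this example. A real provider will give you its own endpoints and usually its own authentication scheme.

```python
import itertools
import urllib.request

# Placeholder proxy endpoints (documentation-only IP range).
# Replace them with the addresses your proxy provider issues.
PROXY_POOL = [
    "http://203.0.113.10:8080",
    "http://203.0.113.11:8080",
    "http://203.0.113.12:8080",
]


def proxy_cycle(pool):
    """Return an iterator that yields proxies in round-robin order, forever."""
    return itertools.cycle(pool)


def build_opener_for(proxy_url):
    """Return a urllib opener that sends HTTP and HTTPS traffic through proxy_url."""
    handler = urllib.request.ProxyHandler({"http": proxy_url, "https": proxy_url})
    return urllib.request.build_opener(handler)


rotation = proxy_cycle(PROXY_POOL)


def fetch(url, timeout=10):
    """Fetch url through the next proxy in the rotation and return the body bytes."""
    opener = build_opener_for(next(rotation))
    with opener.open(url, timeout=timeout) as response:
        return response.read()
```

Each call to `fetch` leaves from a different address in the pool, so consecutive requests are not tied to one IP. Many paid providers handle rotation on their side behind a single gateway address, in which case a loop like this is unnecessary.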

Randomize Web Scraper Timing and Behaviors

Web scraping bots send multiple requests within a short period. When that happens, the target website records those requests as unusual traffic and responds by triggering CAPTCHAs. It’s almost impossible to avoid CAPTCHAs entirely when using web scraping bots, but randomizing the scraper’s behavior and timing can make them far less frequent.

Randomizing the time a web scraper spends on each page makes its visits look less mechanical, so the website is less likely to record them as suspicious and respond with a CAPTCHA. In addition, you can randomize the scraper’s movements and activities. Automate form submissions, and program the scraper to click and move the mouse at random intervals.
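As a rough illustration of randomized timing, the sketch below pauses for a random interval between requests. The bounds of 2 and 8 seconds are arbitrary assumptions, not recommended values, and `crawl` and `random_delay` are hypothetical helper names. The `fetch` argument stands for whatever function your scraper uses to download a page.

```python
import random
import time


def random_delay(min_seconds=2.0, max_seconds=8.0):
    """Pick a pause length uniformly between the two bounds."""
    return random.uniform(min_seconds, max_seconds)


def crawl(urls, fetch, min_seconds=2.0, max_seconds=8.0, sleep=time.sleep):
    """Fetch each URL in turn, pausing a random interval between requests."""
    urls = list(urls)
    results = {}
    for index, url in enumerate(urls):
        results[url] = fetch(url)
        # No need to wait after the final page.
        if index < len(urls) - 1:
            sleep(random_delay(min_seconds, max_seconds))
    return results
```

The `sleep` parameter is there so the pause can be swapped out in tests. In normal use you would leave it at its default. Random mouse movements and clicks need a browser automation tool and are outside what this sketch covers.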

Avoid Honeypot CAPTCHAs

Honeypots are traps concealed with CSS: form fields or links that human visitors never see but that a naive bot will fill in or follow. When creating web scraping bots, add robust checks for elements that CSS hides on a page. Bots that can detect these hidden elements and leave them alone are less likely to trigger CAPTCHAs.

Once a bot can spot elements hidden with CSS, it becomes easier for it to avoid honeypots. Honeypot CAPTCHAs hide behind CSS and block the IPs whose traffic appears bot-triggered. You can avoid getting your IP address blocked by setting your web scraping tools not to interact with pages confirmed to have honeypots.
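One simple way to check for hidden elements is to scan the HTML for fields and links that carry hiding styles. The sketch below uses Python’s built-in `html.parser` and looks only at inline `style` attributes and the `hidden` attribute. That limit is an assumption made to keep the example short: elements hidden through a stylesheet or a class name would need a rendering browser to detect. `HoneypotFinder` is a made-up class name.

```python
from html.parser import HTMLParser

# Inline-style fragments that hide an element (spaces are stripped before matching).
HIDDEN_STYLE_MARKERS = ("display:none", "visibility:hidden")


class HoneypotFinder(HTMLParser):
    """Collect names of form fields and hrefs of links hidden with inline CSS."""

    def __init__(self):
        super().__init__()
        self.hidden_fields = []
        self.hidden_links = []

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        style = (attributes.get("style") or "").replace(" ", "").lower()
        is_hidden = "hidden" in attributes or any(
            marker in style for marker in HIDDEN_STYLE_MARKERS
        )
        if not is_hidden:
            return
        # <input type="hidden"> is a normal form mechanism, not a trap.
        if tag == "input" and attributes.get("type") != "hidden":
            self.hidden_fields.append(attributes.get("name"))
        elif tag == "a":
            self.hidden_links.append(attributes.get("href"))


sample = """
<form>
  <input type="text" name="email">
  <input type="text" name="website" style="display: none">
  <input type="hidden" name="csrf_token" value="abc">
</form>
<a href="/trap" style="visibility:hidden">do not follow</a>
<a href="/products">Products</a>
"""

finder = HoneypotFinder()
finder.feed(sample)
print(finder.hidden_fields)  # ['website']
print(finder.hidden_links)   # ['/trap']
```

A scraper could leave the listed fields empty and skip the listed links, or avoid the page altogether if either list is non-empty.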

Don’t Click on Direct Links

Authoritative sites may trigger CAPTCHAs when a client requests their pages directly, with no referring page, many times over. These websites have many smaller websites linking to them, so genuine visitors often arrive through a link. The CAPTCHA defense mechanisms used by these websites protect against false and bot-generated traffic.

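An easy way to bypass CAPTCHA defense systems triggered by direct requests is to set the referrer header, which HTTP spells `Referer`. When you send the header, your requests look as though they came from a link on another page, so the site’s bot detection is more likely to treat them as genuine.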
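Setting the header takes a single line with Python’s standard `urllib`. In this sketch both URLs are placeholders and `build_request` is a made-up helper name. Which referring page looks plausible depends on the target site.

```python
import urllib.request


def build_request(url, referer):
    """Return a Request for url that carries a Referer header."""
    request = urllib.request.Request(url)
    # HTTP spells the header name "Referer", with a single "r" in the middle.
    request.add_header("Referer", referer)
    return request


# Placeholder URLs for illustration only.
request = build_request("https://example.com/products", "https://www.google.com/")
print(request.get_header("Referer"))  # https://www.google.com/
```

Passing the resulting object to `urllib.request.urlopen` sends the request with the header attached.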

Conclusion

When crawling websites to harvest data for important business decisions, you can get trapped in CAPTCHAs. CAPTCHAs are there to differentiate between human and bot activity on a website, and the systems behind them are good at telling when the traffic flowing into a site is not real. Since website scraping tools send many requests in quick succession when harvesting data, the target websites will likely flag them.

Getting your web crawler’s IP address blocked means that you can’t crawl the website again from that address. However, you can get around the problem by using proxies, as they rotate your requests across many IPs and their use is hard to detect. Free proxies tend to be unstable and aren’t reliable enough to maintain connections throughout a web scraping session, so always go for a premium provider.
